260-Page Screenplay in One Session: An AI Experiment with Parallel Agents

A colleague from the film business and I regularly talk about AI. His position: AI can assist, sure — but a long-running story with consistent characters and believable dialogue? It can't do that.

That inspired me to run an experiment. Not to prove him wrong, but to find out where the actual limits are. The result: a 260-page visual screenplay with 46 scenes, 6 character bibles and 53 images (46 scene images plus 7 character portraits), created in a single session.

The starting point

We started with a 4-page PDF treatment. Logline, world description, a character table, a rough 3-act structure. Plus a generated movie poster. Not much — but a clear framework.

Before a single line of screenplay was written, I sent out a research agent. It investigated professional screenwriting methods: the Save the Cat Beat Sheet, Syd Field's Paradigm, Dan Harmon's Story Circle, industry-standard character bible structures, and lookbook best practices. The result was a 10-page research report.

Research first, then structure, then write. In that order.

Characters first, then story

The most important decision was to write the character bibles before the screenplay. Each main character got a 2-3 page profile with 10 sections: basic data, visual appearance, chronological backstory, psychological profile with conscious and unconscious motivation, fatal flaw (hamartia), character arc with 5 turning points, speech profile with example dialogues, stress reactions, key relationships, and symbolism.

The template was developed on the first character and then applied consistently to all the others. Three of the six bibles were created in parallel: separate agents, same style guide, same world information.
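The ten-section template can be pictured as a reusable data structure that every agent receives in the same rendered form. This is a minimal sketch, assuming each section is stored as plain text; the class name `CharacterBible`, its field names and the `to_context` helper are hypothetical and not taken from the original setup.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass
class CharacterBible:
    """Hypothetical ten-section character bible mirroring the template above."""
    basic_data: str
    visual_appearance: str
    backstory: str          # chronological backstory
    psychology: str         # conscious and unconscious motivation
    fatal_flaw: str         # hamartia
    arc: list[str]          # the 5 turning points of the character arc
    speech_profile: str     # including example dialogues
    stress_reactions: str
    key_relationships: str
    symbolism: str

    def __post_init__(self) -> None:
        # Enforce the template rule of exactly 5 turning points per character.
        if len(self.arc) != 5:
            raise ValueError(f"arc needs 5 turning points, got {len(self.arc)}")

    def to_context(self, name: str) -> str:
        """Render all sections as one text block to hand to an agent as shared reference."""
        parts = [f"# Character bible: {name}"]
        for f in fields(self):
            value = getattr(self, f.name)
            if isinstance(value, list):
                # Number the turning points so agents can refer to them.
                value = "\n".join(f"{i}. {step}" for i, step in enumerate(value, 1))
            parts.append(f"## {f.name.replace('_', ' ').title()}\n{value}")
        return "\n\n".join(parts)
```

Keeping the template as a fixed structure means a bible written by a different agent cannot silently drop a section, which is the property the parallel bible writing relies on.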

The effect was clearly noticeable. The character bibles contained speech profiles with example dialogues, pacing notes, and each character's speech development per act. As a result, the protagonists stayed consistent across all screenplay blocks, even though different agents wrote them. Each character kept their own voice, tempo, and speech patterns. The bibles functioned as a shared reference, much like the series bible in a real writers' room.

Story architecture via the Beat Sheet

The treatment only had bullet points per act. From these, a complete Save the Cat Beat Sheet was developed with 15 beats, concrete scenes, page counts, and minute estimates: 46 scenes in total for approximately 135 minutes of screen time.

Each beat was checked against the character arcs: Does the protagonists' emotional development match the timing? Are the decisive moments at the right points? Does each character get enough presence for their arc?
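The last of these questions, whether each character gets enough presence, can be approximated mechanically once the Beat Sheet exists as data. A minimal sketch, assuming each scene records its beat, its estimated minutes and the characters who appear in it; the `Scene` type, `presence_report` and the 10% threshold are illustrative assumptions, not part of the original workflow.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass
class Scene:
    number: int
    beat: str               # Save the Cat beat, e.g. "Catalyst"
    minutes: float          # estimated screen time
    characters: list[str]   # who appears in the scene


def presence_report(scenes: list[Scene], cast: list[str], min_share: float = 0.1) -> dict[str, float]:
    """Return each cast member's share of total screen time and warn below min_share."""
    total = sum(s.minutes for s in scenes)
    if total == 0:
        raise ValueError("beat sheet has no screen time")
    screen_time = Counter()
    for s in scenes:
        for name in set(s.characters):  # count each character once per scene
            screen_time[name] += s.minutes
    shares = {name: screen_time[name] / total for name in cast}
    for name, share in shares.items():
        if share < min_share:
            print(f"warning: {name} appears in only {share:.0%} of the screen time")
    return shares
```

A check like this only measures presence; whether the emotional development lands at the right beats still needs a human (or model) reading of the arcs.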

Six agents writing simultaneously

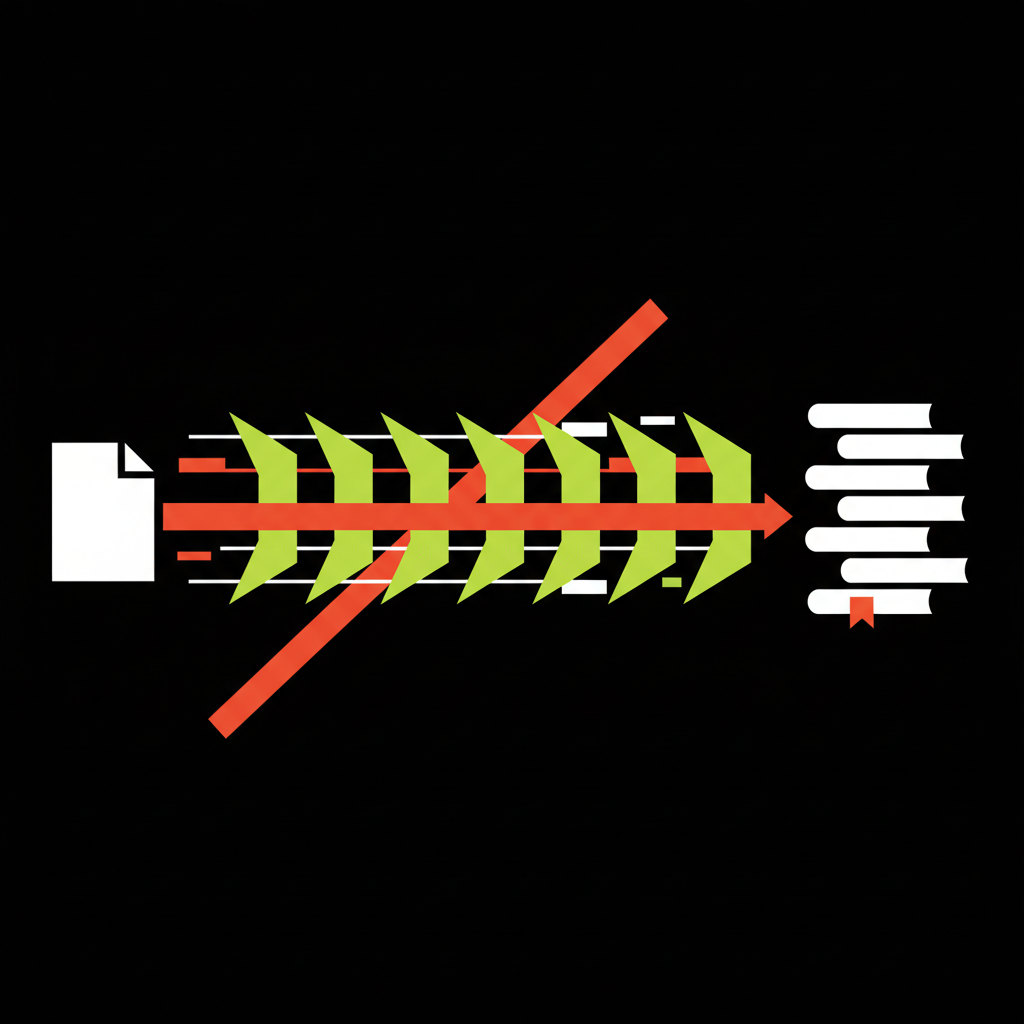

Then came the genuinely interesting part: the Beat Sheet was split into 6 blocks of 25-35 pages each, and all 6 blocks were written simultaneously by separate agents.

Each agent received the complete world context, all character voices, and the exact scene specifications from the Beat Sheet. The agents worked in parallel for about 5-10 minutes, and after that the complete screenplay was done.
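The fan-out step can be sketched with Python's `concurrent.futures`. This is a minimal sketch under stated assumptions: scenes are split into six contiguous blocks of roughly equal page count, and `write_block` is a hypothetical placeholder for whatever agent call actually produced each block, since the original tooling is not described in detail.

```python
from __future__ import annotations

from concurrent.futures import ThreadPoolExecutor


def split_into_blocks(pages_per_scene: list[int], n_blocks: int = 6) -> list[list[int]]:
    """Split scene indices into n contiguous blocks with roughly equal page counts."""
    target = sum(pages_per_scene) / n_blocks
    blocks: list[list[int]] = []
    current: list[int] = []
    acc = 0
    for i, pages in enumerate(pages_per_scene):
        current.append(i)
        acc += pages
        # Close a block when the cumulative page count passes the next threshold;
        # the last block stays open and takes all remaining scenes.
        if acc >= target * (len(blocks) + 1) and len(blocks) < n_blocks - 1:
            blocks.append(current)
            current = []
    if current:
        blocks.append(current)
    return blocks


def write_block(block_id: int, scene_ids: list[int], shared_context: str) -> str:
    """Hypothetical stand-in for an agent call; here it only returns a stub."""
    return f"[block {block_id}: scenes {scene_ids[0] + 1}-{scene_ids[-1] + 1}]"


def write_screenplay(pages_per_scene: list[int], shared_context: str, n_blocks: int = 6) -> list[str]:
    blocks = split_into_blocks(pages_per_scene, n_blocks)
    if not blocks:
        return []
    with ThreadPoolExecutor(max_workers=len(blocks)) as pool:
        # Every agent gets the same shared context (world, bibles, beat specs).
        futures = [pool.submit(write_block, i, ids, shared_context) for i, ids in enumerate(blocks)]
        # Collect in block order, not completion order, so the script stays sequential.
        return [f.result() for f in futures]
```

Threads fit here because each agent call would mostly wait on network I/O; the design choice that matters is that all workers read the same context, while only the scene range differs.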

The principle is similar to a TV writers' room, where different writers work on different episodes — all using the same series bible as their shared foundation.

The visual layer

A style guide defined the visual DNA of the screenplay. The 46 scene images and 7 character portraits were created with Gemini image generation, all using the same style suffix for a consistent look. A Python build script then read all the Markdown files, converted them to HTML, and embedded all images as Base64. The PDF was exported via headless Chrome: 260 pages, 122 MB.
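The embedding and export steps can be sketched with the standard library alone. This is a minimal sketch, not the original build script: Markdown-to-HTML conversion is left out (a library such as `markdown` would normally handle it), and the Chrome binary name and file paths are assumptions. `--headless` and `--print-to-pdf` are standard Chrome command-line switches.

```python
import base64
import mimetypes
import re
import subprocess
from pathlib import Path

# Matches the src attribute of <img> tags only, not <script src=...>.
IMG_SRC = re.compile(r'(<img\b[^>]*?\bsrc=")([^"]+)(")')


def to_data_uri(image_path: Path) -> str:
    """Encode an image file as a Base64 data URI so the HTML needs no external files."""
    mime, _ = mimetypes.guess_type(image_path.name)
    payload = base64.b64encode(image_path.read_bytes()).decode("ascii")
    return f"data:{mime or 'application/octet-stream'};base64,{payload}"


def embed_images(html: str, base_dir: Path) -> str:
    """Replace every relative <img src="..."> with an inline data URI."""
    def replace(m: re.Match) -> str:
        src = m.group(2)
        if src.startswith(("data:", "http://", "https://")):
            return m.group(0)  # already inline or remote: leave untouched
        return m.group(1) + to_data_uri(base_dir / src) + m.group(3)
    return IMG_SRC.sub(replace, html)


def export_pdf(html_file: Path, pdf_file: Path, chrome: str = "google-chrome") -> None:
    """Print the self-contained HTML to PDF with headless Chrome (binary name is an assumption)."""
    subprocess.run(
        [chrome, "--headless", f"--print-to-pdf={pdf_file}", html_file.resolve().as_uri()],
        check=True,
    )
```

Base64 encodes every 3 bytes as 4 characters, so the intermediate HTML grows by about a third over the raw image bytes. The trade-off buys a single self-contained file that Chrome can render without resolving relative paths.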

What's methodically interesting

The character-first approach proved decisive. Without the character bibles, consistency across six blocks written in parallel wouldn't have been possible. The detailed speech profiles gave the agents a clear reference, and as a result the protagonists remained believable throughout the story.

It was also interesting that the AI independently developed a mirror structure as a dramaturgical thread: several character pairs mirror each other thematically, a motif that runs consistently through all 46 scenes. This wasn't specified; it emerged from the combination of research and character arcs.

Another point: the AI showed deliberate restraint at key moments. The emotional climax of the screenplay consists of exactly one word. No embrace, no monologue, no soundtrack. This break with Hollywood convention fits the character profiles and shows that the bibles shaped not just the characters' language, but also their dramaturgical logic.

Conclusion

The experiment showed that the question is poorly framed. It's not about whether AI can write a screenplay. It's about how you structure the process.

Character bibles before writing. Research before structure. Clear specifications for parallel agents. The quality of the output depends directly on the quality of the preparation, just as it does with human writers.

Would the result hold up against a script written by experienced screenwriters? Honestly, I doubt it. But the fundamental structure worked: 260 pages with consistent character voices, a continuous dramaturgical logic, and a plausible emotional arc. I wouldn't have expected that to be possible before this experiment. And it was definitely one of the most fun AI experiments I've done so far.