When AI Reads Binary — How Language Models Are Changing Vulnerability Research

AI systems are systematically finding vulnerabilities in compiled software, faster and at greater scale than human experts. What this means for IT security.

In late 2024, Google's Project Zero, working together with Google DeepMind, published something that caused a stir in the security community: an AI agent called Big Sleep had found a previously unknown vulnerability in SQLite, an exploitable stack buffer underflow. Google called it "the first public example of an AI agent finding a previously unknown exploitable memory-safety issue in widely used real-world software."

That was a year and a half ago. Since then, the situation has escalated significantly. What was a single research success back then has become a systematic process. AI systems are now finding security vulnerabilities in software considered well-audited for decades, faster and at a scale that human experts cannot match.

What Has Changed

Until recently, software analysis was the domain of highly specialized experts. Anyone who wanted to find vulnerabilities in compiled software (binaries without source code) needed deep knowledge of assembly, operating system internals, memory management, and reverse engineering. These skills take years to acquire, and the number of people worldwide who have mastered them at a high level is limited.

That's exactly what's changing. Large language models can now read compiled code, recognize patterns, and identify potential vulnerabilities. Not perfectly. Not without errors. But at a speed and breadth that manual analysis cannot match.

The Numbers

A few concrete data points to make this tangible.

Google's OSS-Fuzz has been using AI-generated fuzz targets since 2023. The result: 26 new vulnerabilities in projects that had already undergone hundreds of thousands of hours of conventional fuzzing. The most notable is CVE-2024-9143 in OpenSSL, an out-of-bounds read/write that researchers estimate had existed in the code for about 20 years and that, according to Google, would not have been discoverable with existing human-written fuzz targets. Across 272 C/C++ projects, the AI-generated targets added more than 370,000 lines of new code coverage.
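A fuzz target is the small piece of glue code that hands arbitrary bytes from the fuzzing engine to the code under test. In OSS-Fuzz these are usually C/C++ harnesses for engines such as libFuzzer, and the AI's contribution is writing more of them so that more code gets exercised. The sketch below only illustrates the idea in Python and is not Google's tooling: `parse_record` is a hypothetical parser with a deliberately missing bounds check, `fuzz_target` is the harness, and `fuzz` is a plain random fuzzer (real engines are coverage-guided). Python raises `IndexError` on the out-of-bounds access; in C the same bug would silently read past the buffer.

```python
import random


def parse_record(data: bytes) -> bytes:
    """Hypothetical parser for a length-prefixed record: 1 length byte, then payload.

    Deliberate bug: the length byte is trusted and never checked against the
    real input size, the same pattern behind many out-of-bounds reads in C.
    """
    if not data:
        return b""
    length = data[0]
    # Reads data[1] .. data[length]; fails if length >= len(data).
    return bytes(data[1 + i] for i in range(length))


def fuzz_target(data: bytes) -> None:
    """The harness: accept arbitrary bytes and route them into the code under test."""
    parse_record(data)


def fuzz(target, iterations: int = 1000, max_len: int = 16, seed: int = 0):
    """Minimal random (not coverage-guided) fuzzer; returns the first crashing input or None."""
    rng = random.Random(seed)
    for _ in range(iterations):
        size = rng.randint(1, max_len)
        data = bytes(rng.randrange(256) for _ in range(size))
        try:
            target(data)
        except IndexError:
            return data  # stand-in for a sanitizer-detected out-of-bounds read
    return None


crash = fuzz(fuzz_target)
print("crashing input:", crash)
```

The harness matters as much as the engine: a bug only surfaces if some target actually routes input to the vulnerable code, which is why new targets can expose flaws in projects that have been fuzzed for years.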

AISLE, an AI-driven security research system, discovered twelve zero-day vulnerabilities in OpenSSL during fall and winter 2025; they were patched in January 2026. Three of them dated from between 1998 and 2000 and had gone undetected for more than 25 years, despite millions of CPU-hours of fuzzing and extensive audits by teams including Google's own security researchers. One of them, CVE-2025-15467, a stack buffer overflow in CMS message processing, was rated critical by NIST with a CVSS score of 9.8. In five of the twelve cases, the AI system directly proposed patches that were accepted.

DARPA AIxCC: At the AI Cyber Challenge finals at DEF CON in August 2025, the systems of the seven finalist teams analyzed 54 million lines of code. They detected 86 percent of the synthetic vulnerabilities planted for the competition and automatically patched 68 percent of those they found. Along the way, they also discovered 18 previously unknown real-world vulnerabilities.
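The patch rate is relative to the vulnerabilities that were found, not to all planted ones. Multiplying the two quoted rates gives the end-to-end share of synthetic vulnerabilities that were both detected and fixed:

```python
# Rates as quoted for the AIxCC finals.
detected = 0.86             # share of planted synthetic vulnerabilities that were detected
patched_given_found = 0.68  # share of the *detected* vulnerabilities that were patched

# The patch rate is conditional on detection, so the end-to-end share
# (detected and patched) is the product of the two rates.
found_and_patched = detected * patched_given_found
print(f"found and patched end to end: {found_and_patched:.1%}")  # 58.5%
```

In other words, roughly four in ten planted vulnerabilities were either missed or left unpatched.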

And the overall trend: the number of AI-discovered CVEs rose from about 300 in 2023 to over 450 in 2024 and over 1,000 in 2025. That is more than a threefold increase in two years, or roughly 80 percent growth per year on a compounded basis.
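Treating the quoted counts as point values (they are approximate, and the later two are lower bounds), the year-over-year and compound growth rates work out as follows:

```python
# Approximate counts of AI-discovered CVEs, as quoted in the text.
counts = {2023: 300, 2024: 450, 2025: 1000}

# Year-over-year growth for each step.
yoy = {year: counts[year] / counts[year - 1] - 1 for year in (2024, 2025)}
for year, growth in yoy.items():
    print(f"{year - 1} -> {year}: {growth:+.0%}")  # +50%, then +122%

# Compound annual growth rate over the two-year span.
span = 2025 - 2023
cagr = (counts[2025] / counts[2023]) ** (1 / span) - 1
print(f"compound annual growth: {cagr:.0%}")  # 83%
```

The growth is uneven (a 50 percent step followed by a more than doubling), so a single annual rate is only a summary of the trend.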

Why Binary Analysis Is the Decisive Point

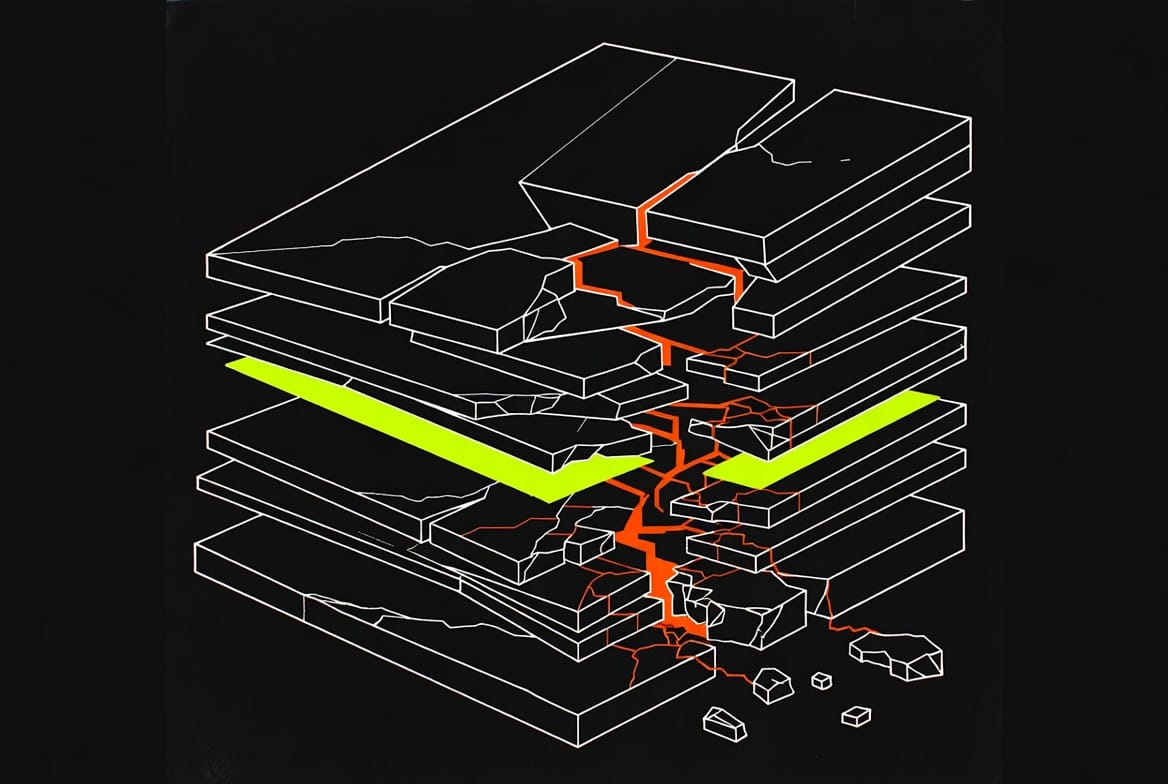

Source code analysis is one thing. But the real turning point lies in the analysis of binaries — compiled software where no source code is available.

A large portion of the world's critical infrastructure runs on software written in C or C++: operating systems, network stacks, industrial control systems, embedded firmware. Both Microsoft and the Chromium project have reported that roughly 70 percent of the security vulnerabilities they fix stem from memory-safety problems such as buffer overflows, use-after-free, and integer overflows. These are exactly the kinds of bugs that occur systematically in C/C++ code, because neither language checks memory accesses at runtime.

Much of this software has never been comprehensively audited for vulnerabilities. Not because nobody cares, but because manual binary analysis is extremely labor-intensive. An experienced reverse engineer needs days to weeks to thoroughly analyze a single component. That doesn't scale.

AI fundamentally changes this equation. Tools like LLM4Decompile translate x86 binaries back into readable C code. Extensions like GhidrAssist connect the reverse-engineering suite Ghidra to language models that can analyze decompiled code, name variables, and recognize vulnerability patterns. The LATTE framework performs automated taint analysis on firmware and found 37 previously unknown bugs, ten of which received CVE numbers.
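The core idea of taint analysis is to mark data from attacker-controlled sources as tainted, propagate that mark through every computation that uses it, and report when tainted data reaches a dangerous sink. The following is a generic forward-propagation sketch over a hypothetical straight-line IR, not LATTE's implementation; the `Stmt` format, the `SOURCES` and `SINKS` tables (including the made-up `read_packet`), and `find_taint_flows` are all illustrative assumptions. Real tools work on lifted or decompiled binary code and must also handle pointers, loops, and calls across functions.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Stmt:
    """One statement of a hypothetical straight-line IR: dst = op(args)."""
    dst: Optional[str]  # variable written, or None if the result is unused
    op: str             # called function or IR operation ("concat", "const", ...)
    args: List[str] = field(default_factory=list)


# Illustrative tables (assumptions): functions whose results an attacker can
# influence, and dangerous calls mapped to argument positions that must stay clean.
SOURCES = {"getenv", "nvram_get", "read_packet"}
SINKS = {"system": [0], "strcpy": [1], "memcpy": [2]}


def find_taint_flows(program: List[Stmt]) -> List[Tuple[int, str]]:
    """Forward taint propagation; returns (statement index, sink) for each tainted sink call."""
    tainted = set()
    findings = []
    for idx, stmt in enumerate(program):
        # 1. Check sink arguments against the taint state before this statement.
        for pos in SINKS.get(stmt.op, []):
            if pos < len(stmt.args) and stmt.args[pos] in tainted:
                findings.append((idx, stmt.op))
                break
        if stmt.dst is None:
            continue
        # 2. Update the taint state of the variable being written.
        if stmt.op in SOURCES or any(a in tainted for a in stmt.args):
            tainted.add(stmt.dst)      # source output, or derived from tainted data
        else:
            tainted.discard(stmt.dst)  # overwritten with untainted data
    return findings


program = [
    Stmt("name", "nvram_get", ["key"]),          # name = nvram_get(key): attacker-influenced
    Stmt("cmd", "concat", ["prefix", "name"]),   # cmd derived from name, so tainted
    Stmt(None, "system", ["cmd"]),               # system(cmd): tainted data reaches a sink
    Stmt("n", "const"),                          # n = 16: a clean constant
    Stmt(None, "memcpy", ["buf", "name", "n"]),  # length argument is clean, so not flagged
]
print(find_taint_flows(program))  # [(2, 'system')]
```

The overwrite rule (`tainted.discard`) keeps a variable from staying tainted after it is reassigned from clean data; without it the analysis would be more conservative and report more false positives.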

What previously took weeks now runs in hours. And with AI assistance, analysis that once required deep expert knowledge becomes accessible to less specialized analysts.

The Dual-Use Problem

And here's where it gets serious. Because the same capabilities that help defenders also help attackers.

Bruce Schneier put it succinctly in October 2025: AI agents are now hacking "at computer speeds and scale." What was previously rare expert knowledge is becoming a commodity. The ability to systematically find vulnerabilities in software and generate exploits is no longer tied to years of experience.

This isn't a theoretical consideration. In July 2025, HexStrike-AI was released, an open-source tool connecting over 150 security tools with language models. Within twelve hours of release, it was discussed on the dark web and deployed against a Citrix NetScaler vulnerability. The window between a vulnerability's disclosure and its first exploitation is shrinking. Schneier argues that the time defenders used to assume they had for patching after a vulnerability becomes known no longer exists.

At the same time, the major tech companies are using the same technology defensively. Google deploys AI-powered fuzzing in OSS-Fuzz and uses Gemini to automatically generate patches for sanitizer-detected bugs. Meta published AutoPatchBench, a benchmark for AI-generated security patches. The seven DARPA AIxCC finalist teams released their systems as open source.

It's a race. And the decisive question is who's faster — attackers or defenders.

What This Means for the Future

From my perspective, there are three developments we should be watching.

First: Scale. Until now, vulnerability research was artisanal work. AI turns it into an industrial process. When a system like AISLE finds twelve zero-days in OpenSSL, one of the most thoroughly audited codebases in the world, the question is no longer whether vulnerabilities lie dormant in less scrutinized software, but how many.

Second: Democratization. The barrier to entry for offensive security is dropping. This no longer concerns only nation-state actors, but potentially anyone with access to a capable language model and the right tools. The asymmetry between attacker and defender is shifting.

Third: Speed. AI-assisted exploit development compresses timelines previously measured in days or weeks down to hours or minutes. The assumption that there's enough time to patch after a vulnerability becomes known is increasingly questionable.

Conclusion

The ability of AI systems to systematically find vulnerabilities in compiled software isn't a future vision. It's happening now. Vulnerabilities that survived 20 or 25 years in production software are being found in weeks. Codebases that have undergone millions of hours of manual and automated testing are yielding new flaws under AI analysis.

This is simultaneously one of the most important developments for IT security and one of the greatest risks. The technology is dual-use in the purest sense: the same models that help defenders harden systems can help attackers break them open.

The decisive question is no longer whether AI can find vulnerabilities. It's whether we're fast enough to close the gaps before someone else exploits them.